OSB, Service Callouts and OQL - Part 3

In the previous sections of the "OSB, Service Callouts and OQL" series, we analyzed the threading model that OSB uses for Service Callouts, examined OSB server threads hung in Service Callouts, and used OQL to identify the proxies and remote services involved in the hang.

This final section of the series focuses on the corrective actions to avoid Service Callout related OSB server hangs. Before we dive into the solution, we need to briefly discuss Work Managers in WLS.

WLS Work Managers

WLS version 9 and newer releases use the concept of Work Managers (WM) and self-tuning of threads to schedule and execute server requests (internal or external). All WLS server threads (ExecuteThread) are held in a global self-tuning thread pool, and requests are associated with WMs. The number of threads in the global pool can grow or shrink based on built-in monitoring and heuristics (growing when requests pile up over a period of time, shrinking when threads sit idle for long, and so on). Once a request is finished, its thread goes back to the global self-tuning pool. A Work Manager associates a request with a scheduling policy, not with a thread. WLS automatically creates a "default" WM. Out of the box, every deployed application uses a copy (template) of the "default" WM.

There are no dedicated threads associated with any WM. If an application decides to use a custom (non-default) WM, the requests meant for that application (such as Webapp Servlet, EJB or MDB requests) are scheduled based on the policies of that WM. A Work Manager is associated with an application via wl-dispatch-policy in the application descriptor. Multiple applications can each use a copy of the same Work Manager policy (each inherits the policies associated with that WM) or refer to their own custom WMs.

Within a given Work Manager, there are options like Min-Thread and Max-Thread Constraints. Explaining the whole WM concept and the various constraints is beyond the scope of this blog entry. Please refer to the "Workload Management in WebLogic Server" white paper on WMs and the "Thread Constraints in Work Managers" blog post to understand more about Constraints and WMs. We will later refer to custom WMs and the Min-Thread Constraint for our particular problem.

Suffice it to say, use a custom Work Manager with a Min-Thread Constraint (set to a really low value, say 3 or 5) for a given application only in rare circumstances, to avoid thread starvation. Typical cases are incoming requests that require an additional server thread to complete (as with a Service Callout, which needs an additional thread to deliver the response notification) or loop-backs of requests (AppA makes an outbound call that lands on the same server as a new request for AppB). Using Min-Thread Constraints excessively can cause too many threads or priority inversion, as mentioned in the blog post referred to above. Use a custom WM with a Max-Thread Constraint only for MDBs (to increase the number of MDB instances processing messages in parallel).
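The starvation case mentioned above (a request that needs one more thread from the same pool in order to finish) can be reproduced outside WLS with plain java.util.concurrent. The sketch below is only an analogy and not WLS code: a fixed thread pool stands in for the server threads, and the names `LoopbackStarvationDemo`, `completedRequests` and `spareThreads` are made up for illustration.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.concurrent.Callable;
import java.util.concurrent.CountDownLatch;
import java.util.concurrent.ExecutionException;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.concurrent.Future;
import java.util.concurrent.TimeUnit;
import java.util.concurrent.TimeoutException;

final class LoopbackStarvationDemo {

    // Runs `requests` tasks on one shared pool of (requests + spareThreads) threads.
    // Each task submits a follow-up task to the SAME pool and waits for it, like a
    // request that needs an additional thread to complete.
    // Returns how many requests saw their follow-up finish within the timeout.
    static int completedRequests(int requests, int spareThreads, long timeoutMillis) {
        ExecutorService pool = Executors.newFixedThreadPool(requests + spareThreads);
        CountDownLatch allStarted = new CountDownLatch(requests);
        CountDownLatch allDecided = new CountDownLatch(requests);
        try {
            List<Future<Boolean>> results = new ArrayList<>();
            for (int i = 0; i < requests; i++) {
                Callable<Boolean> request = () -> {
                    // Wait until every request occupies a pool thread.
                    allStarted.countDown();
                    allStarted.await();
                    Future<?> followUp = pool.submit(() -> { });
                    boolean gotThread;
                    try {
                        followUp.get(timeoutMillis, TimeUnit.MILLISECONDS);
                        gotThread = true;
                    } catch (TimeoutException starved) {
                        followUp.cancel(false); // no free thread picked it up
                        gotThread = false;
                    }
                    // Hold this thread until all requests have their outcome,
                    // so an early timeout cannot free a thread for the others.
                    allDecided.countDown();
                    allDecided.await();
                    return gotThread;
                };
                results.add(pool.submit(request));
            }
            int count = 0;
            for (Future<Boolean> result : results) {
                if (result.get()) {
                    count++;
                }
            }
            return count;
        } catch (InterruptedException | ExecutionException e) {
            throw new IllegalStateException(e);
        } finally {
            pool.shutdownNow();
        }
    }

    public static void main(String[] args) {
        System.out.println(completedRequests(3, 0, 300));  // no spare thread: prints 0
        System.out.println(completedRequests(3, 1, 2000)); // one spare thread: prints 3
    }
}
```

With no spare thread, every request times out because the follow-up work never gets scheduled. A single spare thread is enough for all of them to finish, which is roughly the guarantee a small Min-Thread Constraint is meant to provide.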

Corrective Actions for handling Service Callouts

Now that we have seen (or detected) how Service Callouts can contribute to Stuck threads and thread starvation, there are solutions that can be implemented to make OSB gracefully recover from such situations.

1) Ensure the remote Backend Services invoked via Service callouts or Publish can scale under higher loads and still maintain response SLAs. Using the heap dump analysis, identify the remote services involved and improve their scalability and performance.

2) Use a Route action instead of a Service Callout whenever possible, that is, when the proxy actually invokes a single service rather than multiple services and the invocation can be implemented as a simple Route. Avoid Service Callouts for calling co-located services that only do simple transformations or logging. Just invoke those as replace/insert/rename/log actions directly instead of using Service Callouts to achieve the same result.

3) Protect OSB from thread starvation due to excessive usage of Service Callouts under load. The actual response handling is performed by an additional thread (Thread T2 in the Service Callout implementation image) for a very short duration; it just notifies the Proxy thread waiting on the Service Callout response.

This is a good match for the custom Work Manager with a Min-Thread Constraint that we discussed earlier, as an additional thread is required to complete a given request (a bit of a loop-back). For the remote Business Service definition that is invoked via Service Callouts, we can associate a custom Work Manager with a Min-Thread Constraint, so that the response handling part (T2) gets a thread scheduled right away (as long as we have not already reached the Min-Thread Constraint count) instead of waiting to be scheduled. Since the thread is used only for a really short duration, it's the right fit for our situation. The thread picks up the Service Callout response of the remote service from the native Muxer layer when the response is ready, immediately notifies the waiting Service Callout thread, and then either works on the next Service Callout response or goes back to the global self-tuning pool. This ensures there are no thread starvation issues with the Service Callout pattern under high loads.
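The hand-off described above (many waiting callout threads, a few very short-lived response threads) can be sketched with plain java.util.concurrent. This is an analogy under stated assumptions, not OSB or WLS code: the separate `responsePool` stands in for the custom Work Manager with a Min-Thread Constraint, and the names `CalloutResponsePoolDemo` and `notifiedCallouts` are hypothetical.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.concurrent.Callable;
import java.util.concurrent.CountDownLatch;
import java.util.concurrent.ExecutionException;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.concurrent.Future;
import java.util.concurrent.TimeUnit;

final class CalloutResponsePoolDemo {

    // Simulates `callouts` proxy threads (T1) that each block until a short
    // response-handling task (T2) notifies them. The T2 tasks run on a separate
    // pool of `responseThreads` threads, so they never compete with the blocked
    // T1 threads. Returns how many callouts were notified within the timeout.
    static int notifiedCallouts(int callouts, int responseThreads, long timeoutMillis) {
        ExecutorService proxyPool = Executors.newFixedThreadPool(callouts);
        ExecutorService responsePool = Executors.newFixedThreadPool(responseThreads);
        try {
            List<Future<Boolean>> waiting = new ArrayList<>();
            for (int i = 0; i < callouts; i++) {
                Callable<Boolean> callout = () -> {
                    CountDownLatch responseReady = new CountDownLatch(1);
                    // T2: very short task that only notifies the waiting T1.
                    responsePool.execute(responseReady::countDown);
                    // T1: blocks until notified or until the timeout expires.
                    return responseReady.await(timeoutMillis, TimeUnit.MILLISECONDS);
                };
                waiting.add(proxyPool.submit(callout));
            }
            int notified = 0;
            for (Future<Boolean> f : waiting) {
                if (f.get()) {
                    notified++;
                }
            }
            return notified;
        } catch (InterruptedException | ExecutionException e) {
            throw new IllegalStateException(e);
        } finally {
            proxyPool.shutdownNow();
            responsePool.shutdownNow();
        }
    }

    public static void main(String[] args) {
        // 20 blocked callouts are served by only 2 response threads.
        System.out.println(notifiedCallouts(20, 2, 2000));
    }
}
```

Because each response task only flips a latch and returns, two response threads are enough to release all twenty waiting callouts in this sketch, which mirrors why a low Min-Thread Constraint (5 or less) is suggested here.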

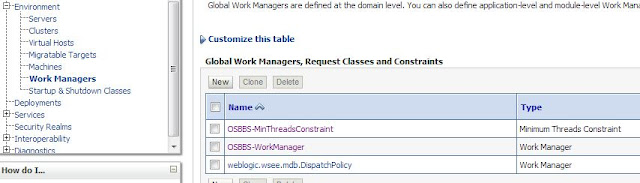

Custom Work Managers and Min-Thread Constraint

Create a custom Work Manager in WLS with a low Min Thread Constraint (5 or less) via the WLS Console.

Log in to the WLS Console and expand the Environment node to select Work Managers.

Start with creation of a Min Thread Constraint.

Select the Count to be 5 (or less).

Target the Constraint to the relevant servers (or OSB Cluster).

Next create a new Work Manager.

Next associate the Work Manager with the previously created Min Thread Constraint.

Leave the rest as empty (Max Thread Constraint/Capacity Constraint).

Note: The server instance has to be restarted to pick up the changes.

OSB Business Service with Custom Work Manager

Log in to the sbconsole.

Create a Session

Go to the related Business Service configuration.

Edit the HTTP Transport Configuration

Select the newly created custom Work Manager for the Dispatch Policy

Save changes.

Commit the session changes

The same custom Work Manager can be used by multiple Business Services that are all invoked via Service Callout actions as the thread would be used for a very short duration and can handle responses for multiple business services. Associate the dispatch policy of the Business services invoked via Service Callouts to use the custom WM.

Summary

Hope this series gave some pointers on the internal implementation of OSB for Route Vs. Service Callouts, correct usage of Service Callouts, identifying issues with callouts using Thread Dump and Heap Dump Analysis and the solutions to resolve them.

Hi Sabha, very good article. I have one question about the number of threads that will be used by the business service. When a service callout is made to a business service, how many threads are used by the WM? Only one, the T2? Or are two threads used: one that makes the call to the business service and another that waits for the response (T2)?

Thank you very much,

regards

The thread invoking the service callout waits until it gets a notification from the callback thread. So, for the most part, a single thread is in use, but during the actual response notification two threads are used (for a really short duration). From the point of view of the business service itself, just one thread is in use at any time (one sends the request out, and another picks up the response and notifies the waiting service caller).

It's a bit like a 4x100 metres relay race, where only one runner is actually active at any time except for the short duration when the baton has to be passed from one runner to the next.

Hi Sabha, thank you very much for your response, but I'm a little confused about when the WM applies to the proxy and when it applies to the business service. For example, if I have a PS that makes a service callout, how many threads will be used by the proxy service and how many by the business service? Thanks in advance,

Regards

By default, the default WM is used by both the PS and the BS response handling part. My recommendation is to continue to use the default WM for the proxy/service callout invocation but to use a different WM for the business service response handling. So, if there are N service callouts, there would be N threads waiting for responses under the ProxyWM, but just 1 or 2 threads would be used for the BusinessResponseWM. The service callout responses don't necessarily all come in at the same time, and even if they do, the BS WM's thread only has to notify the waiting proxy and return immediately to handle the next available callout response.

Hi Sabha, great explanation, now I'm clear on how it works, great,

Regards

Hi Sabha,

First, let me thank you for this series of great articles. It's not often you can get documentation on OSB with that level of expertise!

It might be interesting to add a few lines on what happens when you call another Proxy Service using the local transport. OSB lacks the ability to "factorize" code: If you want to have a kind of generic handling for your errors for example, you have to replicate the same piece of message flow in all your proxy services, or you can use service callouts to invoke a local proxy like you would invoke a function in java. It seems that you don't recommend that according to the solution 2) you propose. I understand this from the optimization standpoint, but the alternative (duplicating over and over the same series of action) is not really an option in terms of code maintenance I think.

So I have 2 questions:

What is your opinion on that matter?

If the service called by the Service Callout is a local proxy service, is the thread management you described the same?

Thank you again for your articles.

Hi Ben,

Irrespective of the nature of the service being called (yet another local proxy or a true remote business service), the service callout will always use two threads as mentioned in the post.

Yes, local proxy is the only option where you need to reuse the same logic. If possible, try to use route directly rather than service callouts.

So in the case where we are calling a local proxy via Service Callout, what WM would be used for the response thread processing? Is it the WM of the business service which is routed to by the local proxy?

Unfortunately, local proxies don't allow a dispatch policy, so no custom WM can be assigned to them. But if the local proxy in turn invokes yet another Business Service, then you can assign the custom WM (with Min-Thread Constraint) to that Business Service, and the response then gets handled without hitting thread starvation issues.
